JIRA Software 7.6 Long Term Support release performance report

This page compares the performance of JIRA 7.2 with that of the JIRA 7.6 Long Term Support release.

About Long Term Support releases

We recommend upgrading JIRA regularly. However, if your organisation's process means you only upgrade about once a year, upgrading to a Long Term Support release may be a good option, as it provides continued access to fixes for critical security, stability, data integrity, and performance issues until this version reaches end of life.

Performance

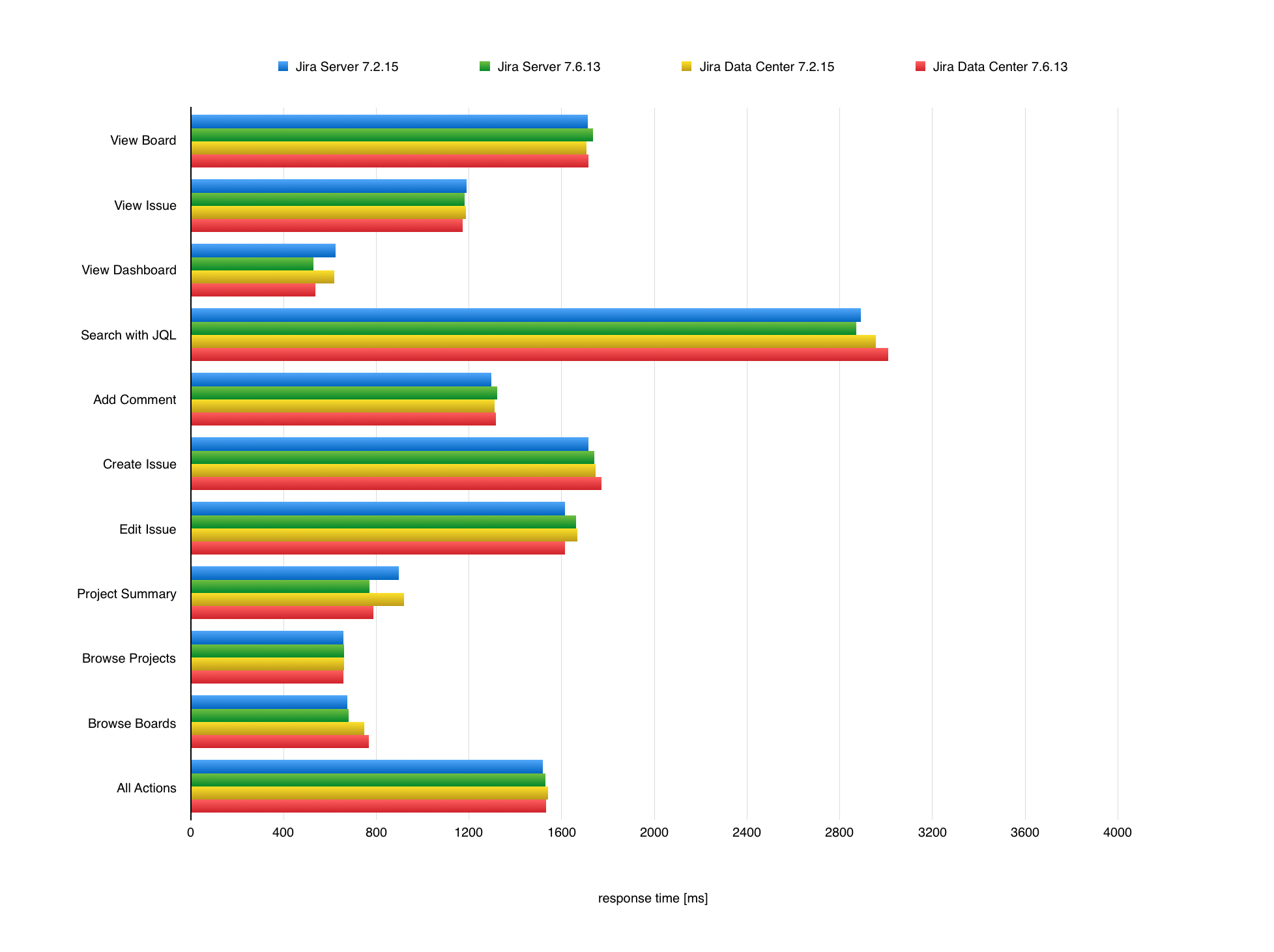

JIRA 7.6 was not focused solely on performance; however, we aim to provide the same, if not better, performance with each release. In this section, we’ll compare JIRA 7.2 to the JIRA 7.6 Long Term Support release, for both Server and Data Center. We ran the same extensive test scenario for both JIRA versions.

The following chart presents mean response times of individual actions performed in JIRA. To check the details of these actions and the JIRA instance they were performed in, see Testing methodology.

Response times for JIRA actions
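
To make the comparison concrete, here is a minimal sketch of how a mean response time per action, and the percentage change between two versions, could be computed from raw timings. It is not the tooling used for this report. The action names and all timing values are hypothetical placeholders, not measurements from our tests.

```python
from statistics import mean

# Hypothetical timings in milliseconds. These are placeholder values for
# illustration only, not results from this report.
samples = {
    "7.2": {"View Issue": [100.0, 120.0, 110.0], "Create Issue": [200.0, 220.0]},
    "7.6": {"View Issue": [90.0, 110.0, 100.0], "Create Issue": [210.0, 230.0]},
}


def mean_response_times(timings):
    """Return the mean response time per action for one version."""
    return {action: mean(values) for action, values in timings.items()}


def compare(baseline, candidate):
    """Return the per-action percentage change from baseline to candidate means."""
    base = mean_response_times(baseline)
    cand = mean_response_times(candidate)
    # Only compare actions measured in both versions.
    return {a: (cand[a] - base[a]) / base[a] * 100.0 for a in base if a in cand}


changes = compare(samples["7.2"], samples["7.6"])
for action, pct in changes.items():
    # A negative value means the candidate version responded faster on average.
    print(f"{action}: {pct:+.1f}%")
```

A mean summarises each action with a single number, which makes two versions easy to compare side by side, but it can hide outliers; percentiles would be a reasonable complement if you run a similar comparison on your own instance.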

Testing methodology

The following sections describe the testing environment, including the hardware specification, and the methodology we used in our performance tests.