Clustering with Confluence Data Center

Confluence Data Center allows you to run a cluster of multiple Confluence nodes, providing high availability, scalable capacity, and performance at scale.

This guide describes the benefits of clustering, and gives you an overview of what you’ll need to run Confluence in a clustered environment, including infrastructure and hardware requirements.

Ready to get started? See Set up a Confluence Data Center cluster

Is clustering right for my organization?

Clustering is designed for enterprises with large or mission-critical Data Center deployments that require continuous uptime, instant scalability, and performance under high load.

There are a number of benefits to running Confluence in a cluster:

- High availability and failover: If one node in your cluster goes down, the others take on the load, ensuring your users have uninterrupted access to Confluence.

- Performance at scale: each node added to your cluster increases concurrent user capacity, and improves response time as user activity grows.

- Instant scalability: add new nodes to your cluster without downtime or additional licensing fees. Indexes and apps are automatically synced.

- Disaster recovery: deploy an offsite Disaster Recovery system for business continuity, even in the event of a complete system outage. Shared application indexes get you back up and running quickly.

- Rolling upgrade: upgrade to the latest bug fix update of your feature release without any downtime. Apply critical bug fixes and security updates to your site while providing users with uninterrupted access to Confluence.

Clustering architecture

The basics

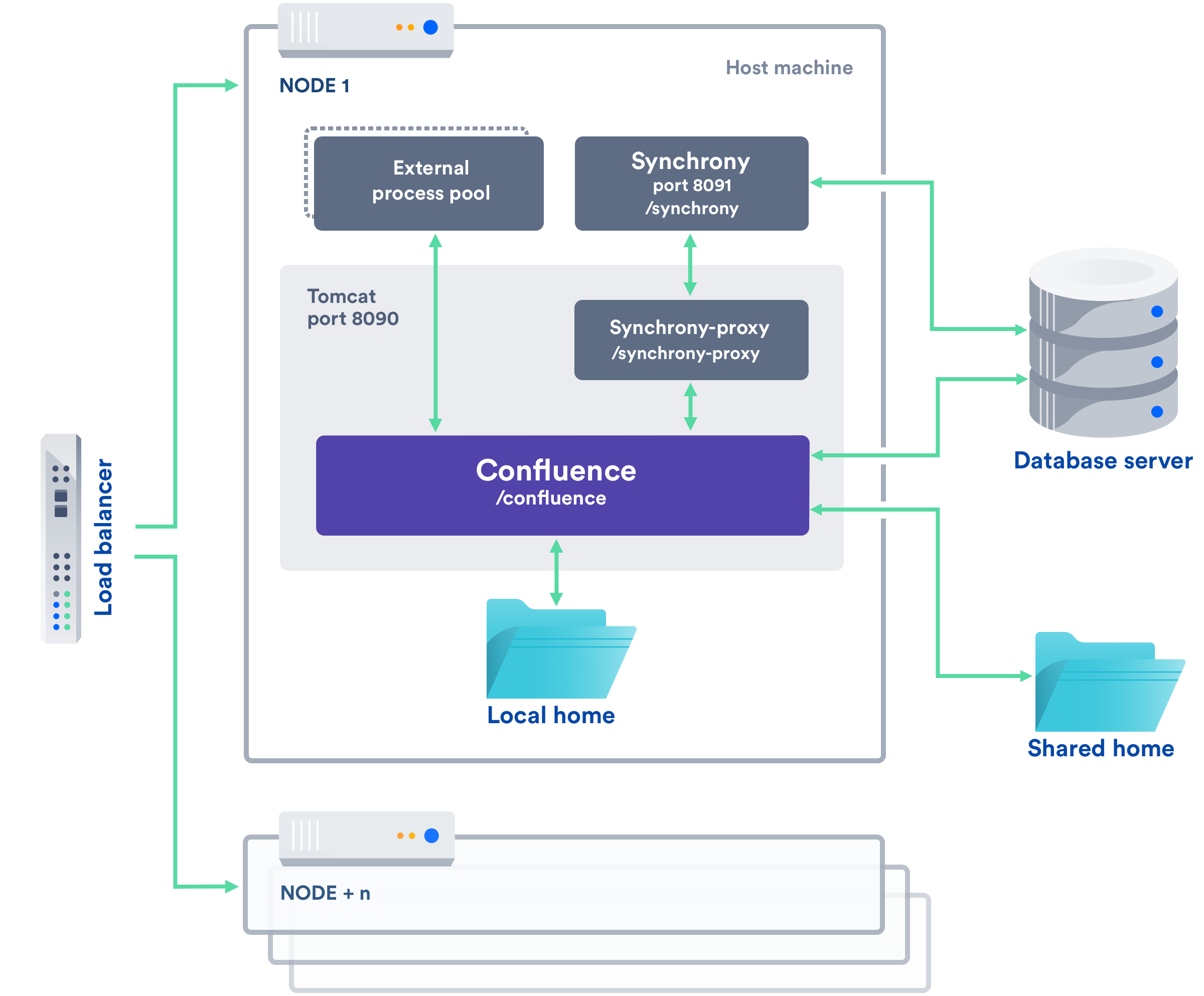

A Confluence Data Center cluster consists of:

- Multiple identical application nodes running Confluence Data Center.

- A load balancer to distribute traffic to all of your application nodes.

- A shared file system that stores attachments, and other shared files.

- A database that all nodes read and write to.

All application nodes are active and process requests. A user will access the same Confluence node for all requests until their session times out, they log out, or a node is removed from the cluster.

The image below shows a typical configuration:

Licensing

Your Data Center license is based on the number of users in your cluster, rather than the number of nodes. This means you can scale your environment without additional licensing fees for new servers or CPU.

You can monitor the available license seats in the License Details page in the admin console.

If you want to automate this process (for example, to send alerts when you are nearing full allocation), you can use the REST API.

Home directories

To run Confluence in a cluster, you'll need an additional home directory, known as the shared home.

Each Confluence node has a local home that contains logs, caches, Lucene indexes and configuration files. Everything else is stored in the shared home, which is accessible to each Confluence node in the cluster. Marketplace apps can choose whether to store data in the local or shared home, depending on the needs of the app.

Here's a summary of what is found in the local home and shared home:

| Local home | Shared home |
|---|---|
| logs, caches, Lucene indexes, configuration files | attachments and other shared files |

If you are currently storing attachments in your database you can continue to do so, but this is not available for new installations. Instead, Amazon S3 object storage can be used for storing the attachments.

Caching

When clustered, Confluence uses a combination of local caches, distributed caches, and hybrid caches that are managed using Hazelcast. This allows for better horizontal scalability, and requires less storage and processing power than using only fully replicated caches. See Cache Statistics for more information.

Because of this caching solution, to minimize latency, your nodes should be located in the same physical location, or region (for AWS and Azure).

Indexes

OpenSearch

As Confluence instances grow in size and scale, the default search engine, Lucene, may be slower to index and return search results. To address this, Confluence Data Center now offers OpenSearch as an opt-in alternative search engine. OpenSearch can take advantage of multi-node instances to manage process-intensive indexing. See Configuring OpenSearch for Confluence to learn how to provision an OpenSearch cluster.

Lucene

Each individual Confluence application node stores its own full copy of the index. A journal service keeps each index in sync.

When you first set up your cluster, you will copy the local home directory, including the indexes, from the first node to each new node.

When adding a new Confluence node to an existing cluster, you will copy the local home directory of an existing node to the new node. When you start the new node, Confluence will check if the index is current, and if not, request a recovery snapshot of the index from either the shared home directory, or a running node (with a matching build number) and extract it into the index directory before continuing the start up process. If the snapshot can't be generated or is not received by the new node in time, existing index files will be removed, and Confluence will perform a full re-index.

If a Confluence node is disconnected from the cluster for a short amount of time (hours), it will be able to use the journal service to bring its copy of the index up-to-date when it rejoins the cluster. If a node is down for a significant amount of time (days) its Lucene index will have become stale, and it will request a recovery snapshot from an existing node as part of the node startup process.

If you suspect there is a problem with the index, you can rebuild the index on one node, and Confluence will propagate the new index files to each node in the cluster.

See Content Index Administration for more information on reindexing and index recovery.

Cluster safety mechanism

The ClusterSafetyJob scheduled task runs every 30 seconds in Confluence. In a cluster, this job is run on one Confluence node only. The scheduled task operates on a safety number, a randomly generated number that is stored both in the database and in the distributed cache used across the cluster. The ClusterSafetyJob compares the value in the database with the one in the cache, and if the values differ, Confluence will shut the node down. This condition is known as cluster split-brain. The safety mechanism ensures your cluster nodes cannot get into an inconsistent state.

If cluster split-brain does occur, you need to ensure proper network connectivity between the clustered nodes. Most likely multicast traffic is being blocked or not routed correctly.
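The comparison performed by the safety job can be pictured with a small toy model. This is only a sketch, not Confluence's actual implementation: the class name, the dictionary-backed "database" and "cache", and the step that writes a fresh number after a successful check are all assumptions made for illustration.

```python
import random


class ClusterSafetyCheck:
    """Toy model of the safety-number comparison (illustrative only)."""

    def __init__(self, database: dict, cache: dict):
        self.database = database  # stands in for the shared database
        self.cache = cache        # stands in for the distributed cache

    def run(self) -> bool:
        """Return True if the node may keep running, False if it should shut down."""
        db_value = self.database.get("safety_number")
        cache_value = self.cache.get("safety_number")
        if db_value is not None and cache_value is not None and db_value != cache_value:
            # The two stores disagree: treat this as split-brain.
            return False
        # Assumption: after a successful check, a fresh number is written to both stores.
        new_value = random.randint(0, 2**31 - 1)
        self.database["safety_number"] = new_value
        self.cache["safety_number"] = new_value
        return True
```

If a node loses contact with the rest of the cluster, its view of the cache can drift from the database, and a check like this one is what would detect the mismatch.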

Balancing uptime and data integrity

By changing how often the cluster safety scheduled job runs and the duration of the Hazelcast heartbeat (which controls how long a node can be out of communication before it's removed from the cluster), you can fine-tune the balance between uptime and data integrity in your cluster. In most cases the default values will be appropriate, but there are some circumstances where you may decide, for example, to trade off data integrity for increased uptime.

Cluster locks and event handling

Where an action must only run on one node, for example a scheduled job or sending daily email notifications, Confluence uses a cluster lock to ensure the action is only performed on one node.

Similarly, some actions need to be performed on one node, and then published to others. Event handling ensures that Confluence only publishes cluster events when the current transaction is committed and complete. This is to ensure that any data stored in the database will be available to other instances in the cluster when the event is received and processed. Event broadcasting is done only for certain events, like enabling or disabling an app.
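The idea of publishing events only after the transaction commits can be sketched as follows. This is a simplified illustration rather than Confluence's event system; the `Transaction` class and its method names are hypothetical.

```python
class Transaction:
    """Toy transaction that defers cluster events until commit (illustrative only)."""

    def __init__(self, publish):
        self._publish = publish  # callable that would send an event to other nodes
        self._pending = []

    def queue_event(self, event):
        # Events raised during the transaction are held back, not sent.
        self._pending.append(event)

    def commit(self):
        # The data is assumed to be written to the database at this point,
        # so other nodes can read it when they receive the event.
        pending, self._pending = self._pending, []
        for event in pending:
            self._publish(event)

    def rollback(self):
        # Nothing was committed, so nothing is broadcast.
        self._pending.clear()
```

Holding events until commit avoids the case where another node reacts to an event before the data it refers to is visible in the database.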

Cluster node discovery

When configuring your cluster nodes you can either supply the IP address of each cluster node, or a multicast address.

If you're using multicast:

Confluence will broadcast a join request on the multicast network address. Confluence must be able to open a UDP port on this multicast address, or it won't be able to find the other cluster nodes. Once the nodes are discovered, each responds with a unicast (normal) IP address and port where it can be contacted for cache updates. Confluence must be able to open a UDP port for regular communication with the other nodes.

A multicast address can be auto-generated from the cluster name, or you can enter your own, during the set-up of the first node.

Infrastructure and hardware requirements

The choice of hardware and infrastructure is up to you. Below are some areas to think about when planning your hardware and infrastructure requirements.

AWS EKS deployment option

If you plan to run Confluence Data Center on AWS, you can deploy using our Helm charts on a managed Kubernetes cluster service. See Running Confluence Data Center in AWS.

AWS Quick Start deployment option

The AWS Quick Start template as a method of deployment is no longer supported by Atlassian. You can still use the template, but we won't maintain or update it.

If you plan to run Confluence Data Center on AWS, a Quick Start is available to help you deploy Confluence Data Center in a new or existing Virtual Private Cloud (VPC). You'll get your Confluence and Synchrony nodes, Amazon RDS PostgreSQL database and application load balancer all configured and ready to use in minutes. If you're new to AWS, the step-by-step Quick Start Guide will assist you through the whole process.

Confluence can only be deployed in a region that supports Amazon Elastic File System (EFS). See Running Confluence Data Center in AWS for more information.

Note that if you deploy Confluence using the Quick Start, it will use the Java Runtime Environment (JRE) that is bundled with Confluence (/opt/atlassian/confluence/jre/), and not the JRE that is installed on the EC2 instances (/usr/lib/jvm/jre/).

Server requirements

You should not run additional applications (other than core operating system services) on the same servers as Confluence. Running Confluence, Jira and Bamboo on a dedicated Atlassian software server works well for small installations but is discouraged when running at scale.

Confluence Data Center can be run successfully on virtual machines. If you plan to use multicast, you can't run Confluence Data Center in Amazon Web Services (AWS) environments as AWS doesn't support multicast traffic.

Cluster nodes

Each node does not need to be identical, but for consistent performance we recommend they are as close as possible. All cluster nodes must:

- be located in the same data center, or region (for AWS and Azure)

- run the same Confluence version on each Confluence node (except during a rolling upgrade)

- run the same Synchrony version on each Synchrony node (if not using managed Synchrony)

- have the same OS, Java and application server version

- have the same memory configuration (both the JVM and the physical memory) (recommended)

- be configured with the same time zone (and keep the current time synchronized). Using ntpd or a similar service is a good way to ensure this.

You must ensure the clocks on your nodes don't diverge, as it can result in a range of problems with your cluster.

How many nodes?

Your Data Center license does not restrict the number of nodes in your cluster. The right number of nodes depends on the size and shape of your Confluence site, and the size of your nodes. See our Confluence Data Center load profiles guide for help sizing your instance. In general, we recommend starting small and growing as you need.

Memory requirements

Confluence nodes

We recommend that each Confluence node has a minimum of 10GB of RAM. A high number of concurrent users means that a lot of RAM will be consumed.

Here are some examples of how memory may be allocated on different sized machines:

| RAM | Breakdown for each Confluence node |

|---|---|

| 10GB |

|

| 16GB |

|

The maximum heap (-Xmx) for the Confluence application is set in the setenv.sh or setenv.bat file. The default should be increased for Data Center. We recommend setting the minimum (-Xms) and maximum (-Xmx) heap to the same value.

The external process pool is used to externalise memory intensive tasks, to minimise the impact on individual Confluence nodes. The processes are managed by Confluence. The maximum heap for each process (sandbox) (-Xmx), and number of processes in the pool, is set using system properties. In most cases the default settings will be adequate, and you don't need to do anything.

Standalone Synchrony cluster nodes

Synchrony is required for collaborative editing. By default, it is managed by Confluence, but you can choose to run Synchrony in its own cluster. See Possible Confluence and Synchrony Configurations for more information on the choices available.

If you do choose to run your own Synchrony cluster, we recommend allowing 2GB memory for standalone Synchrony. Here's an example of how memory could be allocated on a dedicated Synchrony node.

| Physical RAM | Breakdown for each Synchrony node |

|---|---|

| 4GB |

|

Database

The most important requirement for the cluster database is that it have sufficient connections available to support the number of nodes.

For example, if:

- each Confluence node has a maximum pool size of 20 connections

- each Synchrony node has a maximum pool size of 15 connections (the default)

- you plan to run 3 Confluence nodes and 3 Synchrony nodes

your database server must allow at least 105 connections to the Confluence database. In practice, you may require more than the minimum for debugging or administrative purposes.
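The arithmetic behind this figure is the sum of every node's maximum pool size. A minimal sketch (the function name is hypothetical) that you can adapt to your own node counts:

```python
def required_db_connections(confluence_nodes: int, confluence_pool: int,
                            synchrony_nodes: int, synchrony_pool: int) -> int:
    """Minimum connections the database must allow for the whole cluster."""
    return confluence_nodes * confluence_pool + synchrony_nodes * synchrony_pool


# 3 Confluence nodes x 20 connections + 3 Synchrony nodes x 15 connections
print(required_db_connections(3, 20, 3, 15))  # prints 105
```

Treat the result as a floor and add headroom for debugging and administrative sessions.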

You should also ensure your intended database is listed in the current Supported Platforms. The load on an average cluster solution is higher than on a standalone installation, so it is crucial to use a supported database.

You must also use a supported database driver. Collaborative editing will fail with an error if you're using an unsupported or custom JDBC driver (or driverClassName in the case of a JNDI datasource connection). See Database JDBC Drivers for the list of drivers we support.

Additional requirements for database high availability

Running Confluence Data Center in a cluster removes the application server as a single point of failure. You can also do this for the database through the following supported configurations:

- Amazon RDS Multi-AZ: this database setup features a primary database that replicates to a standby in a different availability zone. If the primary goes down, the standby takes its place.

- Amazon PostgreSQL-Compatible Aurora: this is a cluster featuring a writer database node replicating to one or more readers (preferably in a different availability zone). If the writer goes down, Aurora will promote one of the readers to take its place. If you want to set up an Amazon Aurora cluster with an existing Confluence Data Center instance, refer to Configuring Confluence Data Center to work with Amazon Aurora.

- Pgpool-II: this is a high-availability (HA) database solution based on Postgres. See Database Setup for Pgpool-II for configuring Confluence to use this.

Shared home directory and storage requirements

All Confluence cluster nodes must have access to a shared directory in the same path. NFS and SMB/CIFS shares are supported as the locations of the shared directory. As this directory will contain a large amount of data (including attachments and backups), it should be generously sized, and you should have a plan for how to increase the available disk space when required.

Remember me and session timeout

The 'remember me' option is enforced by default in a cluster. Users won't see the 'remember me' checkbox on the login page, and their session will be shared between nodes. See the following knowledge base articles if you need to change this, or change the session timeout.

- How to configure the 'Remember Me' feature in Confluence

- How to adjust the session timeout for Confluence

Load balancers

We suggest using the load balancer you are most familiar with. The load balancer needs to support ‘session affinity’ and WebSockets. This is required for both Confluence and Synchrony. If you're deploying on AWS you'll need to use an Application Load Balancer (ALB).

Here are some recommendations when configuring your load balancer:

- Queue requests at the load balancer. By making sure the maximum number of requests served to a node does not exceed the total number of HTTP threads that Tomcat can accept, you can avoid overwhelming a node with more requests than it can handle. You can check the maxThreads in <install-directory>/conf/server.xml.

- Don't replay failed idempotent requests on other nodes, as this can propagate problems across all your nodes very quickly.

- Using least connections as the load balancing method, rather than round robin, can better balance the load when a node joins the cluster or rejoins after being removed.
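To illustrate how least connections and request queuing fit together, here is a minimal sketch of the selection logic. It is not the configuration of any particular load balancer. The function name, the node names, and the max_threads value of 48 are assumptions for illustration. Check your own server.xml for the real maxThreads value.

```python
from typing import Optional


def pick_node(active: dict[str, int], max_threads: int) -> Optional[str]:
    """Pick the node with the fewest active requests, or None to queue the request."""
    # Only consider nodes that still have a free HTTP thread.
    candidates = {node: count for node, count in active.items() if count < max_threads}
    if not candidates:
        return None  # every node is at capacity: hold the request at the load balancer
    return min(candidates, key=candidates.get)


print(pick_node({"node1": 12, "node2": 3, "node3": 7}, max_threads=48))  # prints node2
```

A node that has just joined or rejoined the cluster starts with few connections, so this method naturally sends it more of the new traffic until it catches up.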

Many load balancers require a URL to constantly check the health of their backends in order to automatically remove them from the pool. It's important to use a stable and fast URL for this, but lightweight enough to not consume unnecessary resources. The following URL returns Confluence's status and can be used for this purpose.

| URL | Expected content | Expected HTTP Status |
|---|---|---|
| http://<confluenceurl>/status | {"state":"RUNNING"} | 200 OK |

Here are some recommendations, when setting up monitoring, that can help a node survive small problems, such as a long GC pause:

- Wait for two consecutive failures before removing a node.

- Allow existing connections to the node to finish, for say 30 seconds, before the node is removed from the pool.
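The health check and the "two consecutive failures" rule can be sketched as below. This is an illustration of the logic only. How the HTTP request is made is left out, and the names `is_healthy` and `NodeMonitor` are hypothetical.

```python
import json


def is_healthy(status_code: int, body: str) -> bool:
    """True when a /status response has HTTP 200 and state RUNNING."""
    if status_code != 200:
        return False
    try:
        return json.loads(body).get("state") == "RUNNING"
    except (ValueError, AttributeError):
        # Body was not valid JSON, or not a JSON object.
        return False


class NodeMonitor:
    """Tracks consecutive failed checks for one node."""

    def __init__(self, failures_before_removal: int = 2):
        self.failures_before_removal = failures_before_removal
        self.consecutive_failures = 0

    def record(self, healthy: bool) -> bool:
        """Record one check result; return True if the node should stay in the pool."""
        self.consecutive_failures = 0 if healthy else self.consecutive_failures + 1
        return self.consecutive_failures < self.failures_before_removal
```

Requiring two failures in a row means a single slow response, for example during a long GC pause, does not remove an otherwise healthy node.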

Network adapters

Use separate network adapters for communication between servers. Cluster nodes should have a separate physical network (i.e. separate NICs) for inter-server communication. This is the best way to get the cluster to run fast and reliably. Performance problems are likely to occur if you connect cluster nodes via a network that has lots of other data streaming through it.

Additional requirements for collaborative editing

Collaborative editing in Confluence 6.0 and later is powered by Synchrony, which runs as a separate process.

If you have a Confluence Data Center license, two methods are available for running Synchrony:

- Managed by Confluence (recommended): Confluence will automatically launch a Synchrony process on the same node, and manage it for you. No manual setup is required.

- Standalone Synchrony cluster (managed by you): You deploy and manage Synchrony standalone in its own cluster with as many nodes as you need. Significant setup is required. During a rolling upgrade, you'll need to upgrade Synchrony separately from the Confluence cluster.

If you want simple setup and maintenance, we recommend allowing Confluence to manage Synchrony for you. If you want full control, or if making sure the editor is highly available is essential, then managing Synchrony in its own cluster may be the right solution for your organisation.

App compatibility

The process for installing Marketplace apps (also known as add-ons or plugins) in a Confluence cluster is the same as for a standalone installation. You will not need to stop the cluster, or bring down any nodes to install or update an app.

The Atlassian Marketplace indicates apps that are compatible with Confluence Data Center.

If you have developed your own plugins (apps) for Confluence you should refer to our developer documentation on How do I ensure my app works properly in a cluster? to find out how you can confirm your app is cluster compatible.

Ready to get started?

Head to Set up a Confluence Data Center cluster for a step-by-step guide to enabling and configuring your cluster.